This is an overview of a digital art project that forced me to stray beyond the p5.js 2D canvas and look to the GPU-powered pixi.js library.

See the finished project

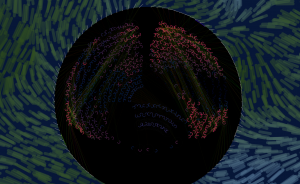

I wanted to create a work of digital art that represented cascades of neurons, simulating the stimulation and inhibition that flow between neurons in a brain. The point was to show a sufficiently complex cascading and shifting effect (triggered by changes sensed in the world), and to ask how observing these patterns might suggest thought or inner experience in the observed mind, and how the biochemical processes might relate to some “inner” experience of the world.

Because p5.js is my go-to visual library, I started there. Each neuron is a JS object with a set of behaviours and a visual rendering. Sorry, I am still using classic JS functions as objects rather than classes. Old habits, unbroken. Each neuron holds an array of connections (dendrites), each of which is a pointer to a source neuron plus a weighting for either inhibition or excitation that modifies the strength of the signal passed from the upstream neuron.

In each frame, each neuron checks the current value of each of its dendrites' source neurons, multiplies it by that dendrite's own excitatory or inhibitory weight, and aggregates the results to decide whether it should itself fire.
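As a rough illustration of this update step, here is a minimal sketch. It is not the project's actual code: the names `Neuron`, `connect`, `threshold` and `stepNetwork` are invented for the example, and the two-phase update/commit is an assumption added so that the order in which neurons are visited within a frame does not matter.

```javascript
// Hypothetical sketch: all names here are illustrative, not from the project.
function Neuron(threshold) {
  this.value = 0;        // output this frame: 1 if fired, else 0
  this.nextValue = 0;    // staged output, committed once every neuron has updated
  this.threshold = threshold;
  this.dendrites = [];   // each entry: { source: Neuron, weight: number }

  // Link to an upstream neuron; a positive weight excites, a negative one inhibits.
  this.connect = function (source, weight) {
    this.dendrites.push({ source: source, weight: weight });
  };

  // Sum the weighted upstream values and decide whether to fire.
  this.update = function () {
    var total = 0;
    for (var i = 0; i < this.dendrites.length; i++) {
      var d = this.dendrites[i];
      total += d.source.value * d.weight;
    }
    this.nextValue = total >= this.threshold ? 1 : 0;
  };

  // Publish the staged value for the next frame.
  this.commit = function () {
    this.value = this.nextValue;
  };
}

// Advance a list of neurons by one frame (assumed two-phase scheme).
function stepNetwork(neurons) {
  neurons.forEach(function (n) { n.update(); });
  neurons.forEach(function (n) { n.commit(); });
}
```

Neurons driven directly by the outside world would be left out of `stepNetwork` and have their `value` set by whatever senses the input.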

Within the neuron various states and counters are updated that will be used in visualisations.

There are three visualisations:

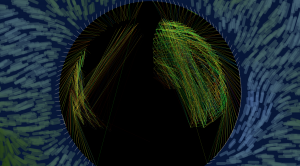

1. The dendrites are represented as straight lines with the hue reflecting the weighted value at a given instant. While the straight line is a very basic representation, the number of connections active at any point can give a spectacular overall effect.

2. The neuron body (effectively the axon hillock) is simply a circle that illuminates when fired, and with a simple fading ripple that propagates out after firing to give a visual echo of an otherwise fleeting event.

3. To enhance the visual effect of the firing neuron, a third visualisation reflects how active a neuron has been in the immediate past, and slowly fades to give a more lasting impression of where recent activity has been within the brain, while still reflecting currently firing neurons.

In each of these effects the power of the visualisation comes from the cascade of firing and state of many neurons and dendrites. The balance between these three shifts over time (using the sine of an increasing angle, offset by a phase for each visual effect).
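The shifting balance can be sketched as follows. This is only an illustration: the function name `effectWeights`, the evenly spaced `PHASES`, and the mapping of the sine into the range 0 to 1 (so it can be used directly as an opacity) are all assumptions, not values from the project.

```javascript
// Hypothetical sketch: names and phase values are placeholders.
function effectWeights(angle, phases) {
  return phases.map(function (phase) {
    // Map sin from [-1, 1] to [0, 1] so the result can serve as an alpha.
    return 0.5 + 0.5 * Math.sin(angle + phase);
  });
}

// Three effects spaced a third of a cycle apart (assumed spacing).
var PHASES = [0, (2 * Math.PI) / 3, (4 * Math.PI) / 3];
```

Calling `effectWeights(angle, PHASES)` each frame, with `angle` increased by a small step, gives one weight per visualisation. With evenly spaced phases the three weights rise and fall in turn, so no single effect dominates for long.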

The quantity and position of neurons, and the number of dendrite connections, are controlled with a data object, layerSpec. For each row it states the radius from a given point in the brain, the angular spread of the row, and the number of neurons in that row. This gives invisible arcs as the scaffold on which neurons are set. Their spacing is set by how many neurons are in that row. The connections to upstream neurons are calculated based on how many neurons are in the preceding row, and how many dendrites are required.
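To make the arc scaffold concrete, here is a minimal sketch. The field names (`radius`, `spread`, `count`, `dendrites`) and both helper functions are hypothetical, and connecting each neuron to its nearest neighbours in the preceding row is just one plausible way to pick upstream neurons from the two counts.

```javascript
// Hypothetical layerSpec shape: field names are illustrative only.
var layerSpec = [
  { radius: 100, spread: Math.PI / 2, count: 4, dendrites: 0 },
  { radius: 160, spread: Math.PI / 2, count: 6, dendrites: 2 }
];

// Place `count` neurons evenly along an arc centred on `centreAngle`.
function layoutRow(row, cx, cy, centreAngle) {
  var points = [];
  for (var i = 0; i < row.count; i++) {
    // t runs 0..1 across the row; a lone neuron sits at the arc's midpoint.
    var t = row.count > 1 ? i / (row.count - 1) : 0.5;
    var angle = centreAngle - row.spread / 2 + t * row.spread;
    points.push({
      x: cx + row.radius * Math.cos(angle),
      y: cy + row.radius * Math.sin(angle)
    });
  }
  return points;
}

// For neuron i of `count`, choose `dendrites` adjacent indices in the
// previous row, centred on the matching relative position (an assumption).
function upstreamIndices(i, count, prevCount, dendrites) {
  var n = Math.min(dendrites, prevCount);
  var centre = count > 1
    ? (i / (count - 1)) * (prevCount - 1)
    : (prevCount - 1) / 2;
  var start = Math.round(centre - (n - 1) / 2);
  start = Math.max(0, Math.min(prevCount - n, start)); // keep the window in range
  var result = [];
  for (var k = 0; k < n; k++) result.push(start + k);
  return result;
}
```

Because spacing falls out of `count` and `spread`, changing one number in the spec reshapes a whole row without touching the layout code.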

The rows are not completely regular; a simple scattering effect keeps the arrangement from appearing too ordered. The brain is arranged as two curved hemispheres to enhance the impression of a brain.

On the outside of the brain there are many sense cells, depicted as ‘hairs’ that move in an external current. This gives our brain some input from the outside world, ensuring that the brain’s stimulation is generative and responsive rather than predetermined.

The current that affects the sense hairs is a simple Perlin noise field. This is visualised with some “sea weed”, for want of a better analogy, that undulates in sympathy with the noise values to indicate to the observer what the world is doing.

The sense cells pass on their sensed changes to their underlying neurons, which, if they fire, pass on the changes.
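One way a sense cell might turn the noise field into a signal is sketched below. `SenseCell` and its fields are invented for the example, and treating the frame-to-frame difference in the noise sample as the "sensed change" is an assumption. The noise function is passed in as a parameter; in a p5 sketch this could be p5's `noise`.

```javascript
// Hypothetical sketch: SenseCell and its fields are illustrative names.
function SenseCell(x, y, noiseFn) {
  this.x = x;
  this.y = y;
  this.last = null;  // previous noise sample; null until the first sense() call
  this.value = 0;    // signal exposed to the underlying neuron

  // Sample the field at time t and report how much it changed since last call.
  this.sense = function (t, scale) {
    var sample = noiseFn(this.x * scale, this.y * scale, t);
    this.value = this.last === null ? 0 : Math.abs(sample - this.last);
    this.last = sample;
    return this.value;
  };
}
```

The underlying neuron can then read `cell.value` in the same way it reads the value of any upstream neuron.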

Even though the visual effects drawn to the canvas in p5 are each very simple (lines, ellipses, arcs), the burden on the CPU becomes evident very quickly as a low frame rate, with hundreds of neurons making thousands of connections.

Using the GPU and WebGL seems like the sensible way out of this, but this is new territory for me.

The p5 WebGL renderer does not recognise all of the 2D primitives and controls. The 3D primitives render very quickly and allow for thousands of moving objects, but they are limiting, and I really want the flat 2D canvas, with shapes and effects that are hard to achieve with 3D shapes. But when I attempt to, for example, build arcs out of 2D triangle strips (the triangle being an available 2D primitive), the strip calculation is done largely back on the CPU, and I lose my GPU speed advantage.

Not wishing to enter the unexplored world of three.js (and still wanting to stick with 2D), I came across pixi.js. Pixi lets you take advantage of the GPU for rendering 2D. It is especially tuned for handling sprites, but because I want to use geometric objects, I used Pixi’s 2D primitives, getting as close as possible to my original p5 version.

While all the control happens in p5 at CPU speed, the shapes, once defined in Pixi, can be updated very fast as long as you are just applying transforms to them and not changing the inherent geometry. For example, changing the angles of an arc involves completely redefining its shape. This is slow to do in the render cycle, as the shape is effectively discarded and rebuilt. However, translation, scaling, rotation, tinting and fading are no problem, as each is just one instruction to the GPU, which does the hard work very quickly.

All told, I probably got a 4-10x speed-up compared with pure p5 rendering to the canvas.

The whole sketch is rendered in a PIXI stage, rather than a p5 canvas. This took some adjustment and learning. While in p5 I tend to build my whole visual on the fly every frame for each object, in Pixi, you build your shape, add it to the stage in setup, and then use the render loop to modify position, rotation, shade, etc. I kept p5 handy as I am so familiar with its library of non-visual functions.
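The build-once, transform-per-frame pattern looks roughly like this. It is a sketch under assumptions, not the project's code: it uses the pre-v8 Pixi `Graphics` calls (`beginFill`, `drawCircle`, `endFill`), takes the `PIXI` namespace as a parameter, and assumes a hypothetical neuron object with `x`, `y`, `glow` (0 to 1) and `fired` fields.

```javascript
// Sketch only: assumes a pre-v8 Pixi Graphics API and a hypothetical
// neuron object with x, y, glow (0..1) and fired fields.

// Build the geometry once, at setup time.
function makeNeuronGraphic(PIXI, stage, neuron, radius) {
  var g = new PIXI.Graphics();
  g.beginFill(0xffffff);       // draw in white so tint can recolour it later
  g.drawCircle(0, 0, radius);  // geometry is centred on the local origin
  g.endFill();
  g.position.set(neuron.x, neuron.y);
  stage.addChild(g);
  return g;
}

// Per frame: touch only cheap display properties, never the geometry.
function updateNeuronGraphic(g, neuron) {
  g.alpha = neuron.glow;                        // fade
  g.tint = neuron.fired ? 0xffff66 : 0x3366ff;  // recolour (placeholder colours)
  g.scale.set(1 + 0.5 * neuron.glow);           // pulse
}
```

In a real sketch, `updateNeuronGraphic` would be called for every neuron from the render loop (for example, a callback registered with the application's ticker), while `makeNeuronGraphic` runs only during setup.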

I am still hitting render limitations, with the frame rate dropping to 20 or lower at around 1,000 neurons with 10,000 connections between them. I think any further speed-up would need shaders or an implementation in three.js. Both of these are shifts in thinking and learning curves I am not ready for just yet, but I think it is inevitable that I will have to take the leap soon.

This Pixi.js tutorial from KittyKatAttack was amazingly helpful. It has been expanded into a full book.
